The Explosion of Data Sizes in Biomedical Sciences and the Need for Proper Size Reduction and Visualization

Werner Van Belle1* - werner@yellowcouch.org, werner.van.belle@gmail.com

1- Yellowcouch;

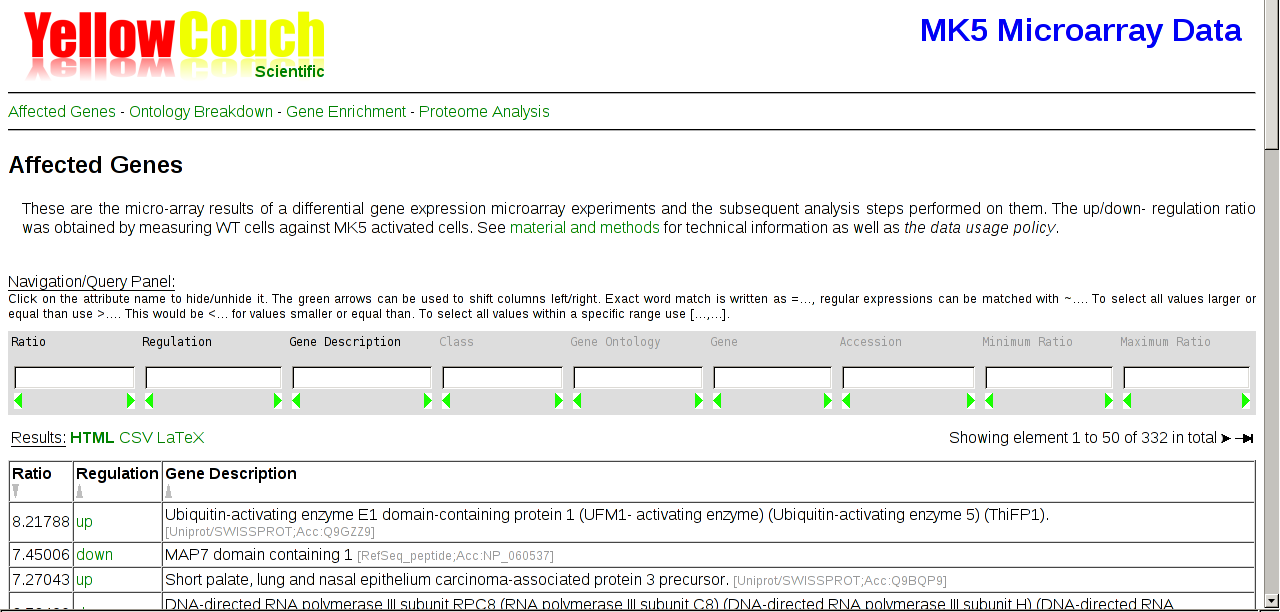

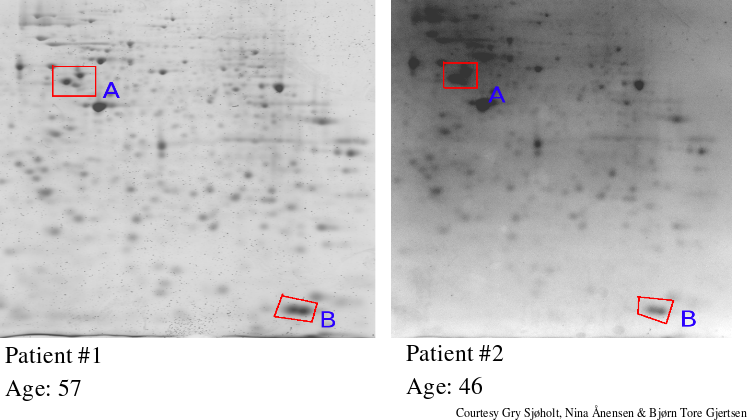

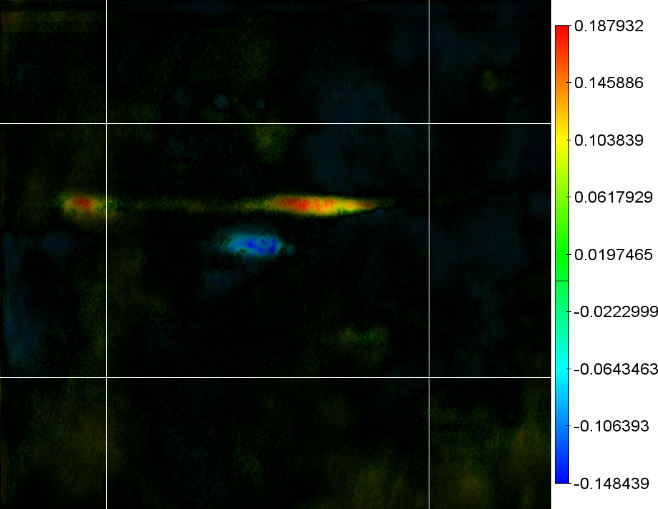

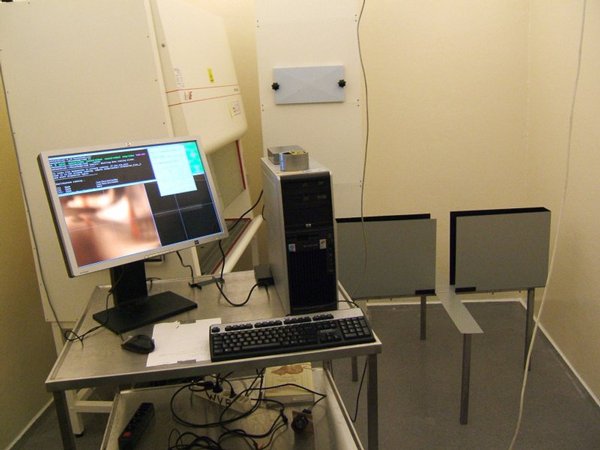

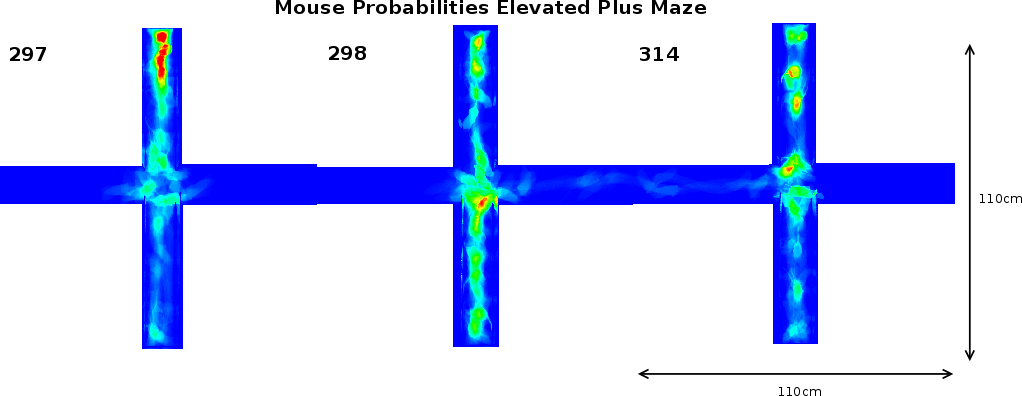

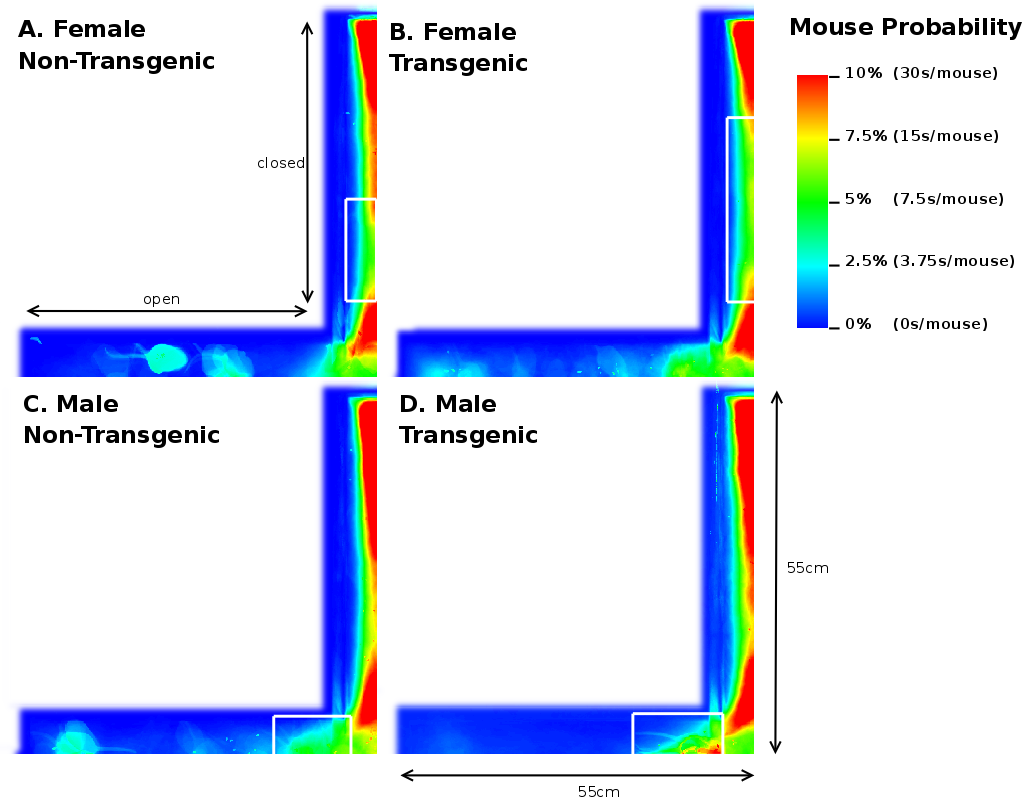

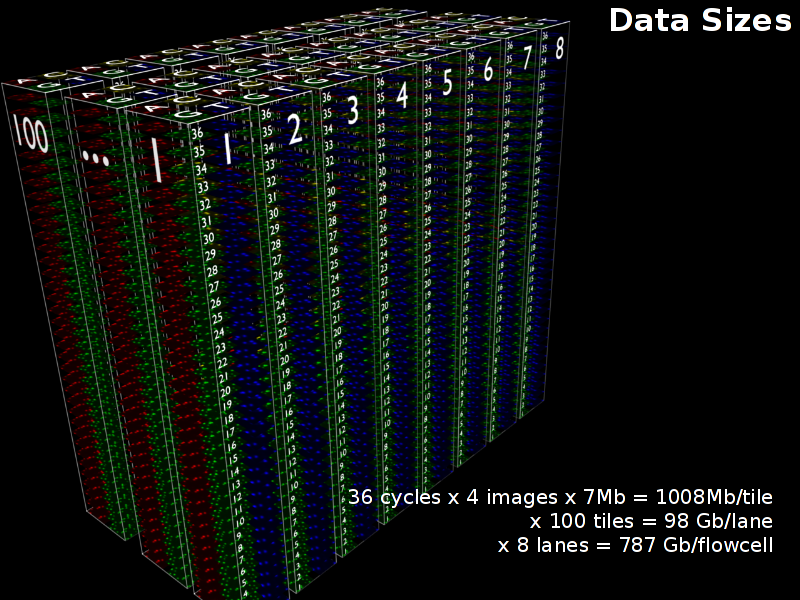

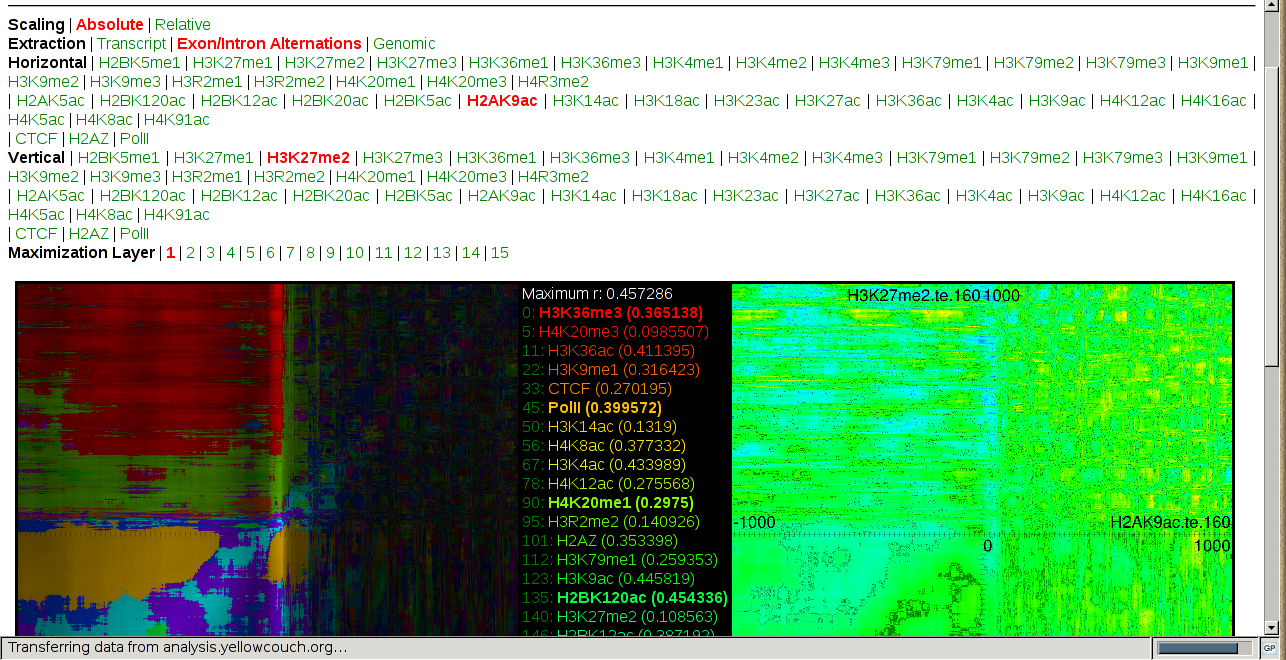

Abstract: Data sizes in the biomedical sciences grow larger each year. While a microarray experiment in 2003 produced perhaps 20 kilobytes of data, a 2D gel analysis in 2004 produced 140 MB, an analysis of mouse tracking in 2006 produced 40 gigabytes, and a single deep sequencing experiment produces around 1.5 TB. To then further correlate multiple deep sequencing tracks, one is stuck with 40 terabytes. Although we have very likely reached some form of limit to this exponential growth, the current state is problematic for researchers both with and without an IT background. To deal with this problem, data integration and visualization tools are becoming increasingly important.

Keywords:

data sizes, data size reduction, data analysis, data visualization

Reference:

Werner Van Belle; The Explosion of Data Sizes in Biomedical Sciences and the Need for Proper Size Reduction and Visualization; Presented at ExistenzGründer Seminar; Lörrach; Germany; June 2009
